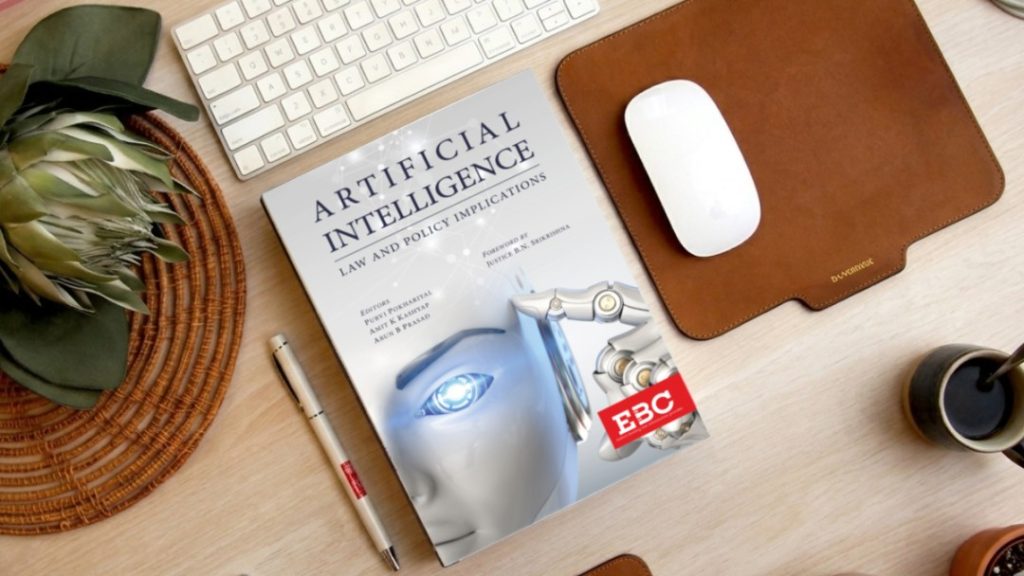

Artificial Intelligence (AI) is rapidly transforming industries across the world. From AI chatbots and virtual assistants to self-driving cars and automated healthcare systems, AI tools are becoming deeply integrated into everyday life. However, as AI systems become more powerful, an important legal and ethical question arises:

Who is responsible when AI causes harm — the developer or the user?

This question has become central to modern discussions around AI liability laws, data privacy, cybersecurity, and technology regulation. Governments and legal experts are now working to define accountability in cases involving AI-generated misinformation, biased decisions, copyright infringement, financial losses, or physical harm.

In this post, we explore AI liability laws, the responsibilities of developers and users, the major legal challenges, and the future of AI regulation.

What Are AI Liability Laws?

AI liability laws are legal rules that determine who is responsible when an artificial intelligence system causes damage or harm, or when its use breaks the law.

These laws may apply to situations involving:

- Incorrect medical recommendations from AI tools

- Self-driving car accidents

- AI-generated false information

- Copyright violations from AI-generated content

- Discriminatory hiring algorithms

- Financial losses caused by automated systems

- Privacy and data misuse

Since AI systems often make autonomous or semi-autonomous decisions, assigning legal responsibility can become complicated.

Why AI Liability Is Becoming Important

AI technology is advancing faster than many existing laws. Traditional legal systems were designed for human actions, not autonomous machine behavior.

As AI adoption grows, governments and companies are increasingly concerned about:

- Consumer protection

- Data privacy

- Cybersecurity risks

- Algorithmic bias

- Intellectual property rights

- Workplace automation

- Public safety

This has led to growing discussions around AI governance and legal accountability.

Understanding Developer Responsibility in AI

AI developers are the individuals or companies that design, train, test, and deploy AI systems.

In many situations, developers may be held responsible if the harm occurs because of:

1. Negligent Design

If an AI system is poorly designed or lacks proper safety measures, developers may face legal liability.

Example:

A self-driving car company releases software with known safety issues that later cause accidents.

2. Biased Training Data

AI systems trained on biased or discriminatory data may produce unfair outcomes.

Example:

An AI hiring tool discriminates against certain groups due to biased historical training data.

3. Lack of Transparency

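Some AI systems operate as “black boxes,” meaning users cannot understand how decisions are made. Regulators increasingly demand explainable AI systems, so a developer whose system cannot account for a harmful decision may find that opacity counted against it.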

4. Failure to Warn Users

Developers may be responsible if they fail to clearly communicate limitations, risks, or proper usage instructions.

5. Data Privacy Violations

If AI systems misuse personal data or fail to protect sensitive information, developers may violate privacy laws.

Understanding User Responsibility in AI

Users also have responsibilities when using AI tools and systems.

A user may be held accountable if they:

1. Misuse AI Technology

Using AI for unlawful activities such as fraud, hacking, or deepfake scams, or to deliberately spread misinformation, can lead to legal consequences.

2. Ignore Usage Guidelines

If users operate AI systems outside recommended safety conditions, they may share liability.

Example:

A company uses AI medical software for purposes the vendor has not authorized, despite explicit warnings against doing so.

3. Publish Harmful AI Content

Users who intentionally distribute harmful or defamatory AI-generated content may be legally responsible.

4. Over-Rely on AI Decisions

Humans are often expected to exercise judgment instead of blindly following AI recommendations.

Example:

A financial advisor relies entirely on AI-generated investment advice and passes it to clients without any human review.

Shared Liability: When Both Developer and User Are Responsible

In many cases, AI liability may be shared between developers and users.

For example:

- A developer creates flawed AI software.

- A user ignores safety warnings and misuses the system.

- Harm occurs because of both actions.

Courts may divide responsibility depending on the circumstances, similar to product liability laws in other industries.
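To make the idea of divided responsibility concrete, here is a purely arithmetic sketch of a proportional split. The function name `apportion_damages`, the 70/30 fault split, and the $50,000 loss are all hypothetical and chosen only for illustration; how fault is actually allocated depends on the jurisdiction and the facts of the case.

```python
def apportion_damages(total_cents, fault_percent):
    """Split a damages amount in proportion to each party's share of fault.

    `fault_percent` maps a party name to a whole-number percentage; the
    percentages must add up to 100. Amounts are integer cents so the
    shares always sum exactly to the total.
    """
    if sum(fault_percent.values()) != 100:
        raise ValueError("fault percentages must add up to 100")
    shares = {party: total_cents * pct // 100 for party, pct in fault_percent.items()}
    # Any cents lost to rounding down go to the party with the largest share.
    remainder = total_cents - sum(shares.values())
    largest = max(fault_percent, key=fault_percent.get)
    shares[largest] += remainder
    return shares


# Hypothetical split: developer 70% at fault, user 30%, on a $50,000 loss.
shares = apportion_damages(5_000_000, {"developer": 70, "user": 30})
print(shares)
```

In this illustration the developer would bear $35,000 and the user $15,000. The arithmetic is the easy part; the hard legal question is how those percentages are decided in the first place.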

Major Legal Challenges in AI Liability

AI liability laws are still evolving, and several legal challenges remain unresolved.

1. Lack of Clear Global Regulations

Different countries are developing different approaches to AI regulation.

For example:

- The European Union focuses heavily on AI risk classification and transparency.

- The United States currently uses a sector-based regulatory approach.

- Many countries are still drafting AI governance frameworks.

This creates legal uncertainty for global businesses.

2. Difficulty Proving Fault

AI systems often make decisions through complex algorithms that are difficult to interpret.

Determining whether harm was caused by:

- the developer,

- the training data,

- the user,

- or the AI system itself

can be legally challenging.

3. Autonomous Decision-Making

Modern AI systems can learn and adapt over time, making their actions less predictable.

This raises questions such as:

- Should AI systems have separate legal status?

- Can companies fully control AI behavior?

- Who is liable when AI behaves unexpectedly?

4. Copyright and Intellectual Property Issues

AI-generated content has created new legal debates around:

- ownership,

- copyright infringement,

- and fair use.

For example, lawsuits involving AI-generated art, music, and writing are increasing globally.

AI Liability Laws Around the World

Different governments are actively developing AI-related laws.

European Union AI Act

The EU AI Act is one of the world’s most comprehensive AI regulations. It categorizes AI systems by risk level, commonly described as unacceptable, high, limited, and minimal risk, and imposes strict requirements on high-risk AI applications.

United States AI Regulation

The U.S. currently regulates AI through existing consumer protection, privacy, and anti-discrimination laws, while discussions continue around federal AI legislation.

India and Emerging AI Policies

India is also developing AI governance frameworks focused on responsible AI, digital safety, and innovation support.

How Companies Can Reduce AI Liability Risks

Businesses using AI should adopt responsible AI practices.

Conduct AI Risk Assessments

Companies should regularly test AI systems for bias, safety, and compliance.
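As one possible starting point for a bias check, the sketch below compares selection rates across groups for a hiring tool like the one described earlier. The function names, the sample data, and the 0.8 threshold are assumptions made for illustration; the threshold is loosely modeled on the "four-fifths" rule of thumb used in U.S. employment guidance, and a real audit would need far more than this single metric.

```python
from collections import Counter


def selection_rates(records):
    """Return the share of positive outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the AI tool recommended the candidate.
    """
    totals = Counter()
    positives = Counter()
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def adverse_impact_ratio(records):
    """Ratio of the lowest group selection rate to the highest."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favored
    return min(rates.values()) / highest


# Hypothetical audit sample: 10 candidates per group.
sample = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

ratio = adverse_impact_ratio(sample)
flagged = ratio < 0.8  # illustrative threshold, not a legal test
print(f"adverse impact ratio: {ratio:.2f}, flagged: {flagged}")
```

A low ratio does not prove discrimination on its own, but it is the kind of signal that should trigger a closer look at the training data and the model before the tool is used on real candidates.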

Maintain Human Oversight

Critical decisions should include human review instead of relying solely on automation.
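One way to build this in, sketched here under assumptions, is to route every high-stakes or low-confidence recommendation to a human reviewer before it takes effect. The `Decision` fields, the `route` helper, and the 0.9 confidence cut-off are hypothetical; each organization would set its own criteria.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    subject: str
    recommendation: str
    confidence: float  # the model's own score between 0 and 1
    high_stakes: bool  # set by policy, e.g. medical or credit decisions


def needs_human_review(decision, min_confidence=0.9):
    """Send a decision to a person when stakes are high or the model is unsure."""
    return decision.high_stakes or decision.confidence < min_confidence


def route(decisions, min_confidence=0.9):
    """Split decisions into an automated queue and a human-review queue."""
    automated, review = [], []
    for decision in decisions:
        if needs_human_review(decision, min_confidence):
            review.append(decision)
        else:
            automated.append(decision)
    return automated, review


# Hypothetical batch of AI recommendations.
decisions = [
    Decision("loan-001", "approve", 0.97, high_stakes=False),
    Decision("loan-002", "deny", 0.62, high_stakes=False),
    Decision("diagnosis-003", "treatment A", 0.99, high_stakes=True),
]

automated, review = route(decisions)
print("automated:", [d.subject for d in automated])
print("human review:", [d.subject for d in review])
```

Note that the high-stakes case goes to a reviewer even though the model is very confident. Keeping a record of who reviewed what also helps later if responsibility for a decision is disputed.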

Improve Transparency

Organizations should clearly explain how AI systems work and how decisions are made.

Strengthen Data Protection

Protecting user data and following privacy laws is essential for legal compliance.

Use Ethical AI Practices

Responsible AI development helps reduce reputational and legal risks.

The Future of AI Liability Laws

AI regulation is expected to become stricter in the coming years.

Future trends may include:

- Mandatory AI transparency requirements

- Stronger consumer protection laws

- Increased accountability for high-risk AI systems

- International AI governance standards

- Clearer rules for AI-generated content and copyright

Governments, businesses, and technology experts are likely to continue debating how to balance innovation with public safety.

Frequently Asked Questions (FAQs)

1. What are AI liability laws?

AI liability laws determine who is legally responsible when an AI system causes harm or damage, or when its use breaks the law.

2. Are AI developers legally responsible for AI mistakes?

Developers may be responsible if the issue results from poor design, biased training data, weak security, or lack of safety measures.

3. Can AI users also face legal consequences?

Yes. Users can be held responsible if they misuse AI systems, spread harmful content, or ignore usage guidelines.

4. What is shared liability in AI?

Shared liability occurs when both the developer and the user contribute to the harm caused by an AI system.

5. Which countries are creating AI regulations?

The European Union, United States, India, China, and several other countries are actively developing AI governance and liability frameworks.

6. Why is AI regulation difficult?

AI systems are complex, adaptive, and sometimes difficult to explain, making it challenging to assign responsibility clearly.

7. How can businesses reduce AI legal risks?

Businesses can reduce risks through ethical AI practices, human oversight, transparency, regular audits, and strong data protection policies.
